The title is just an attempt to be catchy and has nothing to do with Hashicorp co-founder Mitchell Hashimoto other than Terraform being a Hashicorp product.

I have noticed a disturbing trend within the Terraform community beginning with Yevgeniy Brikman’s, “Terraform Up & Running,” although it could predate the material. Monorepos! I don’t recall if Brikman called them monorepos, or anything for that matter, I just recall the practice.

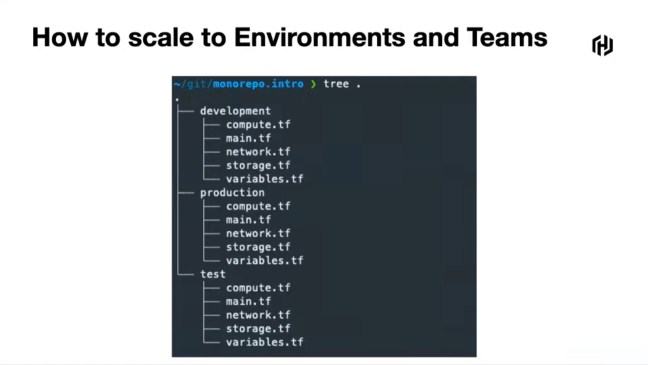

What is a Monorepo?

A Monorepo is a single repository that stores the source code for multiple environments, like Development, Staging, and Production. It was recently discussed in the Hashiconf Europe talk, “Using a Monorepo with Terraform Cloud”. Within the repo, it is organized with separate directories for each environment and the code is copied from one to the other.

Why is this madness?

Infrastructure as Code is the practice of using DevOps tooling and paradigms and applying them to infrastructure. Simply put, we have configuration code and we do all the DevOps stuff to it. This should mean many things and they should be understood:

- Version control

- Small releases

- Testing

- Automation

- and more

While automated testing with a unit testing framework has not been widely adopted within the Terraform community, it is coming. It also means that if we do not have such a framework implemented, then we need to be certain to do everything else with best practices. For emphasis, I will rephrase; if you deviate from one best practice, this is not an excuse to deviate from more best practices, it is a reason to double down on them.

Automated testing has been assisted with Terratest (from Brikman’s Gruntwork.io), Terraform Kitchen, etc. In addition, other testing frameworks could be used to validate the infrastructure has been deployed as expected, like Pester for Powershell. And to be clear, testing is important. One of my first uses of Terraform was to deploy an Azure App Service Plan and I defined it to use Linux rather than Windows. The Terraform code successfully executed and the resources were deployed. When I looked at the results in the portal, I noticed that the App Service Plan was deployed with Windows instead of Linux. Things like this happen; this is why we test. It is not sufficient to call it “good” when Terraform does not have errors.

Automated testing is fantastic because when we make changes to the code, it gets tested. If we specify a new release of Terraform, it gets tested. This is the value of automation. As people, we get bored or complacent and we can convince ourselves that testing this time is unnecessary, everything will be fine because it was fine the last ten times. In addition, that is time and time is money.

What does this have to do with Monorepos?

If you have separate code for Development, Staging, and Production, how did you test the code that deployed Production? If your answer is that you tested the Development code in Development, copied it to Staging, tested it in Staging, and copied the code to Production, you did not test the code that was used to deploy to Production. How do you know that you properly copied the code? Why is it even copied in the first place? If it is the same code, why not use the same code? This is why we practice making reusable code.

As trivial as it seems to copy code, it can cause horrible outcomes. In the mid-2000s, I worked for an Application Service Provider (ASP), which was a forerunner to Cloud Computing. We hosted an application that our company developed for our customers. The code was written in a 4GL platform called Progress and the application was originally written for SCO Unixware, was ported to Linux, and during the latest effort was ported to running on new Windows servers. We had a small support staff including a single help desk member, myself as the Systems/Network Admin, and the CTO. We were supporting multiple deployments of the application and many other things for our customers (like hosting Exchange, file & print servers, and managing their Active Directory environments). I do not recall how many users in total, but it was well into 5-figures which was quite a bit for a small staff.

During this major effort to port to Windows, code changes were frequent and things were finally ready to have a customer moved. As expected, there were issues and bugs were fixed. However, something kept happening. Calls would continue to come in for new issues after the code was deployed. One of the developers would come and work through the issue. Time and time again, the issue was that not all of the code was deployed. There would be missing libraries or executables and things would fall flat for the users. Copying code is not as trivial as you expect, especially when there is not some tool verifying that all was copied well.

Monorepos are anti-pattern

Bottom line. Code should be written to be reused. Another poor practice that is often paired here, since it is copied code, is the use of feature flags to differentiate between environments. Why? This is code and the logic works in one environment and not another? How do you test Production when you deploy to Staging and the feature flag says not to do the Production things because this is Staging?

Use the exact same code in each environment or you did not test it. Most of the emphasis has been on automated testing, but we know this is not commonly done in the Terraform community. First, it should be. Second, if it is not done, then this means manual testing. How is manual testing performed? Make a checklist of the items to verify and verify them. Is the correct SKU deployed? Are the proper resources deployed? Are the permissions established as expected? These things and many more are what should be verified. If it is not automated, this is even more important, not less.

You cannot test one instance, copy the code with feature flags, and expect that it will behave as declared because you did not verify it.

Other issues with Monorepos

Committing to a repository is an event. These events are used to trigger CI/CD pipelines, if they are implemented. If code is committed for Development, how does the repository know that it was only Development code that was changed and any action that is taken should not be performed in Production? Mostly because the code for Production did not change; it will still likely run, but there will not be a change to implement. Except, to pull all of this off, logic was introduced in to the pipeline. Logic is code. Code should be tested.

Something seems to keep creeping in here.

How should this addressed?

A single repository with a single set of code. This should be deployed into a Development environment and verified (ideally walked through automated tests). Any particulars about a deployment that may need to be different should be handled through input variables. Development should have input variables. The code should be committed.

The commit should trigger the pipeline and it should be set to execute in a Staging environment where the appropriate variable values should be provided. Everything should be verified and another good practice would be to burn in (see if issues arise over time) and perhaps even load tested. Once this stage is completed, then it can move to Production, which will have different input variables.

If these input variables have anything to set the environment other than components of a naming convention or tagging, then the feature flags are probably being used. The values of the input variables are another debatable concept for testing. If you want proper testing, the SKUs and resource allocations would ideally be the same. If the application is multi-tiered and scaled horizontally, then it would be great to know that it can scale to five nodes if production is expected to scale to five nodes. This does raise some challenges, however. It is likely that paying for production to operate 24×7 in Staging is not desirable. One option would be to deploy like Production, complete the testing, and then fall back to lower cost SKUs and scale down. Another option would be to have yet another environment. Perhaps a Testing environment is for the steady state “like production” but cheaper so any issues can be reproduced there, but then Staging is identical to Production and destroyed after sufficient testing.

Terraform Cloud Workspaces

Workspaces in Terraform Cloud can contribute to this by managing the multiple states for each environment. This is a great practice.

There is a feature that does allow executions to only run if a change happens within a certain subdirectory of a repository; this supports an anti-pattern. Just because the feature exists does not mean it is a good practice to use it. I am sure that Hashicorp implemented it because many customers requested something like this to address some of the issues related to monorepos. The better solution is to eliminate the monorepos.

Brikman, Yevgeniy. Terraform Up & Running. O’Reilly, 2019.

Using a Monorepo with Terraform Cloud. Patrick Carey, Hashiconf Europe 2021, https://youtu.be/4Rlwh4YVLRY. Accessed July 29, 2021.